Good morning! A leaked file reveals the hidden rules behind Claude 4.5’s behavior, and OpenAI is testing a new “Truth Mode” to make AI admit mistakes and act more transparently. Let’s dive in.

In today’s email:

Daily Update

Social Media

Today’s Highlight

YouTube

Today’s Trends

Social Media

Read time: 6 min

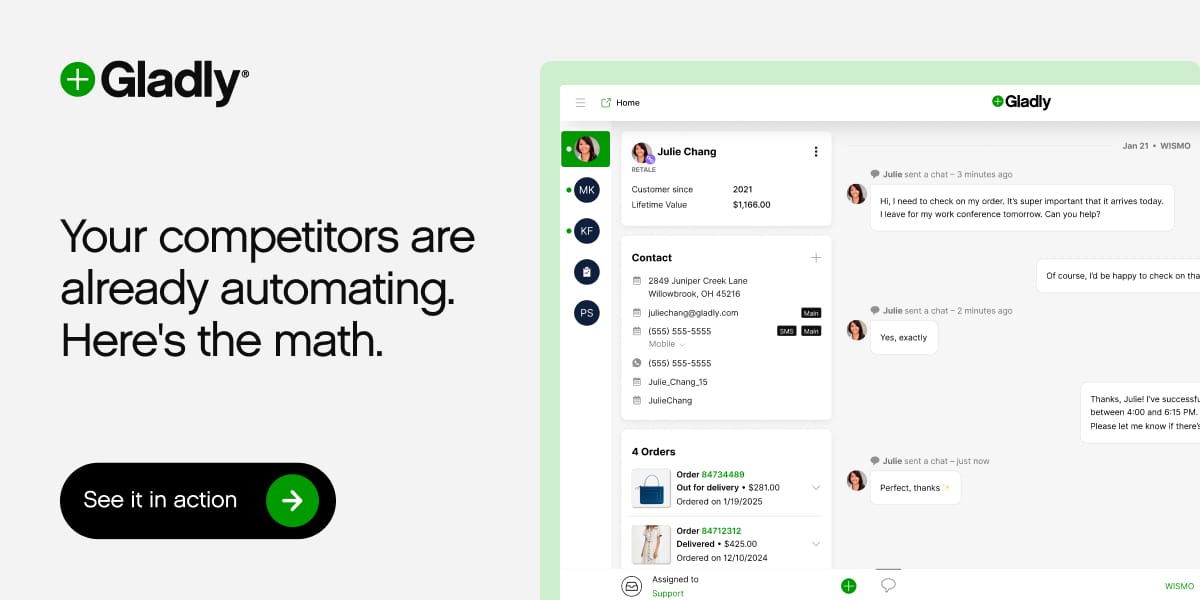

Your competitors are already automating. Here's the data.

Retail and ecommerce teams using AI for customer service are resolving 40-60% more tickets without more staff, cutting cost-per-ticket by 30%+, and handling seasonal spikes 3x faster.

But here's what separates winners from everyone else: they started with the data, not the hype.

Gladly handles the predictable volume (FAQs, routing, returns, order status) while your team focuses on customers who need a human touch. The result? Better experiences. Lower costs. Real competitive advantage. Ready to see what's possible for your business?

DAILY UPDATE

A leaked file shows the hidden guiding rules behind the Claude 4.5 Opus AI model.

An internal file tied to Claude 4.5 Opus has leaked, showing how the model’s personality, safety rules, and behavior were shaped during training. Anthropic has confirmed the file is real and was used in the model’s training.

A researcher found the file by asking Claude to show its system instructions, which led to a long document called Soul Overview.

The file focuses on safety, ethics, and helpful behavior, telling the model to avoid harmful topics and stay aligned with Anthropic’s values.

In tests, Claude reproduced the same long text many times, and other users saw similar results, suggesting the file really was part of its training data.

This shows how large AI models are shaped by hidden internal guides that define their tone, limits, and duties. It also raises questions about how many of these internal rules a model can reveal and how transparent AI training should be.

Read more…

SOCIAL MEDIA

TODAY’S HIGHLIGHT

OpenAI is testing a new way to make AI tell the truth about its own bad actions.

OpenAI is building a new training system that pushes AI models to report honestly on what they actually did, even when their actions were problematic.

OpenAI is creating a confession system that focuses fully on honesty.

The model is rewarded for admitting actions such as breaking rules or deliberately underperforming.

The system aims to improve transparency and reveal hidden steps inside AI behavior.

This work could help future AI models behave more transparently and safely, since they learn to speak openly about their actions instead of hiding mistakes.

Read more…

YOUTUBE

TODAY’S TRENDS

Pylar

Connect your entire data stack to any agent, securely

Compass

Ask Slack about your data and get answers instantly in chat

GNGM

The sleep habit app for night-owls trying to reset

Slack Feature Request Agent

Never lose another customer request

Protaigé

The AI that thinks like a marketing agency

SOCIAL MEDIA

That’s it for today!

Before you go, we’d love to know what you thought of today’s newsletter so we can improve the experience for you.